It’s that time of the year. You’ve miraculously managed to get your classroom set up in a couple of days and memorized the names of all your new students. (You keep getting Lucas D. and Lucas F. confused, but you’re almost there.)

And now it’s time for you to administer your diagnostic reading assessment. F&P. STEP. MAP. DRP. ERDA. DIBELS. So many A-C-R-O-N-Y-M-S.

This means hours of instructional time spent testing while you try to keep the rest of your students occupied with independent work. You’ve made all the photocopies. You’ve taken students’ running records in the hallway on your lunch break and prep. You’ve scribbled down notes as fast as you could as a student answered a reading comprehension question. Now you have data and lots of it. Piles and piles of it.

The moment you’ve completed your diagnostic reading assessments can be bittersweet. On the one hand, you’ve completed your first monumental task of the school year. On the other, you have the painstaking task of organizing and interpreting all of the data, often leading to the realization that your students are more behind grade level than you anticipated.

Now what?

Diagnostic assessments can provide a wealth of information if you’re looking in the right place.

Here are 4 things to keep in mind:

1. Reading diagnostics are important for all grade levels.

Diagnostic assessments are pivotal for identifying students who need extra support due to gaps in reading instruction, reading disabilities, vision problems, etc. This seems like a no-brainer, but the purpose and priority of a diagnostic test often get overshadowed by the millions of other things we have to do as teachers, especially in the older grades. Rafael Heller, Ph.D. [1] phrased it well: “If doctors performed surgery without examining their patients first, they’d be tried for malpractice. Why should it be considered any less scandalous for teachers to provide instruction without first assessing kids’ knowledge and skills?”

As students move past elementary school, the focus often switches from diagnostic assessments to summative assessments designed to see if they’ve learned what has been taught. Teachers can see that students are struggling with literacy, but it’s hard to determine the underlying reason if students haven’t been properly assessed before any instruction is given.

2. Kids may need to build stamina, even for a diagnostic test.

Students may not yet have the stamina and persistence necessary to work through the challenges they’ll encounter in the diagnostic assessment you are administering. Many diagnostics contain multiple parts, which can leave students feeling mentally and physically exhausted, so the results may not give you the truest assessment of their reading ability.

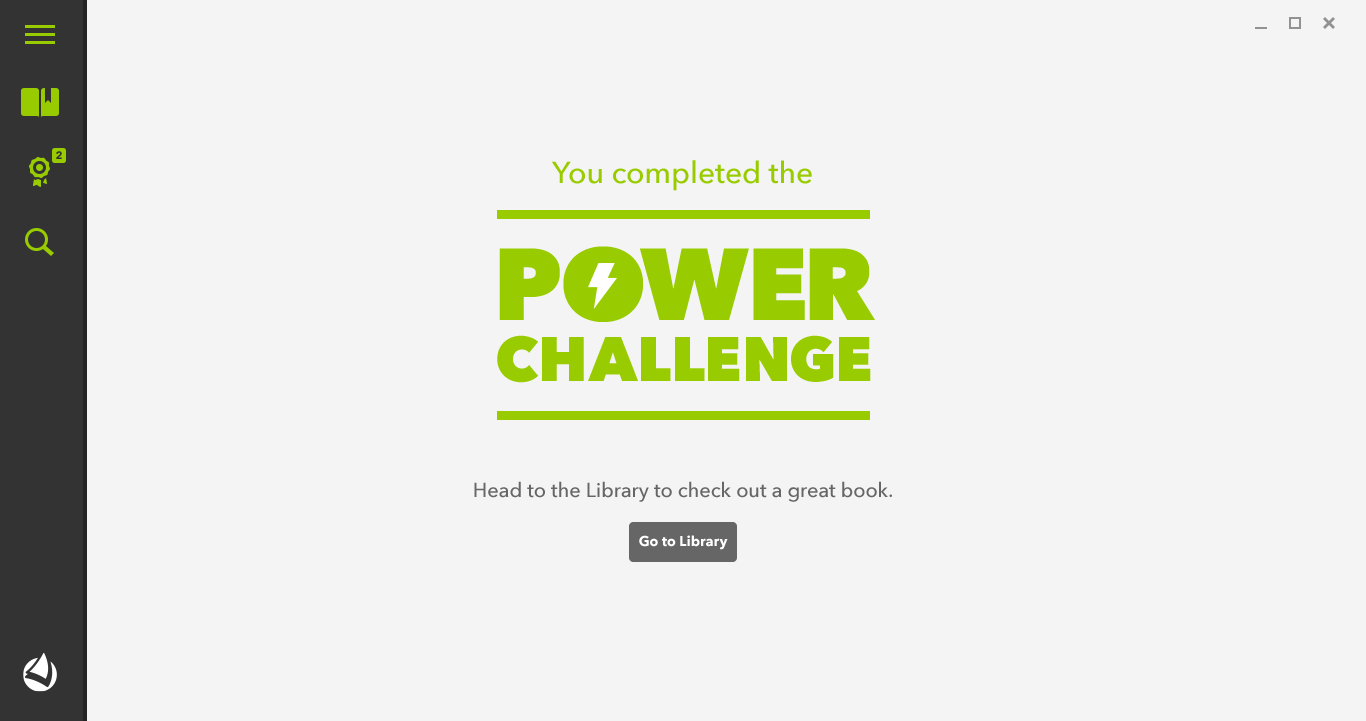

Arielle Walker, a teacher at Denver Public Schools, experienced this when she introduced the Power Challenge™, LightSail’s diagnostic reading assessment, to her class. “The Power Challenge is much more aligned with what they’re expected to do for the PARCC Assessment, unlike diagnostic tests I’ve given in the past. My students weren’t used to this and became careless as they read.”

Walker’s students performed much lower than she expected on the Power Challenge, which she attributes to a lack of required stamina. With high-stakes assessments becoming the norm, stamina is a skill that students need to develop now more than ever. More importantly, students need to develop the habit of being able to sit still and read for an extended period of time as their instruction moves from “reading to understand” to “reading to learn” in higher grades.

3. A student’s familiarity with the diagnostic test can skew your data for better or for worse.

Once, while administering the STEP (Strategic Teaching and Evaluation of Progress) test to my students, I was having a comprehension conversation with a new student, Emon. I was using universal prompts like “Tell me more” or “Why do you think that?” to get more information. All of my other students who had been STEPped before had become accustomed to these prompts from our guided reading instruction and knew they meant they should be elaborating on their answer.

Emon, on the other hand, had no idea and simply repeated his answer over and over again. While at first I was frustrated because it felt like the inference was on the tip of his tongue, I realized this was the truest representation of what he knew. My other students had become masters at taking STEP tests. They were so used to these universal prompts that I was unintentionally leading them to the right answer — one they would not have been able to get to on their own.

Walker had a similar experience. The lower scores reflected that her students were struggling, but she realized that it was the format of the test, not the content itself, that was causing them to underperform on the Power Challenge. So the scores did in fact reflect her students’ reading habits – just different ones than she was used to assessing. It ultimately pushed her to look at the whole picture, not just assessment scores.

4. The qualitative data you collect while administering a diagnostic assessment can be just as important as the quantitative data.

Many diagnostic assessments provide space for teachers to record anecdotal comments and analyses about reading behaviors or comprehension. Does the student problem-solve, self-monitor, or self-correct? Do they read with fluency or expression? Do they add up clues to derive meaning? Do they move beyond literal understanding? Are they nervous? Where in the retell do they struggle? Unfortunately, these pages are often left blank. I’ve definitely been guilty of this… haven’t you? In a rush to assess everyone before the deadline, we risk losing the opportunity to collect qualitative data that allows us to assess the whole reader. Don’t miss out on this opportunity!

There may be an easier way to collect that valuable reading data!

New can often be scary, but new can also be better. There are a number of digital tools, including LightSail, that can make collecting diagnostic data easier.

But if you’re anything like me, you’re a bit of a control freak, and letting a digital tool access your students can be nerve-racking if you’ve never done it before. Let’s be real: it can be daunting even if you do consider yourself a “tech-savvy” teacher. You’re not alone. As Walker noted, “It’s a tremendous help to have LightSail’s data, but it took me a while to completely trust the accuracy of a digital tool.”

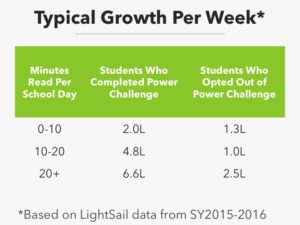

However, having a digital tool that administers, grades, and then does the quantitative analysis not only gives you reliable data but can also free up additional time to focus on stamina and other qualitative reading data. Need more proof? Take a look at the data table.

LightSail offers the Power Challenge to provide teachers and students with a reliable baseline measure for every student. This baseline allows LightSail to immediately generate a personalized library of Power Texts™, books within each student’s zone of proximal development (ZPD). The Power Texts fall within 100L above and below a student’s estimated reading level, a range that research [2] supports as the appropriate difficulty level for maximizing progress because “it is the zone the child is both challenged and presented new vocabulary, but also in which there are enough context clues that the child can construct meaning without being frustrated.”
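To make the "100L above and below" band concrete, here is a minimal sketch of the arithmetic. It is not LightSail's actual implementation: the function names are hypothetical, and it assumes that "100L" refers to points on the Lexile scale and that the band is inclusive at both ends.

```python
def power_text_range(estimated_level: int, band: int = 100) -> tuple[int, int]:
    """Return the (low, high) bounds of the reading band around a student's level.

    Assumption: levels are Lexile-style integers, and the band is symmetric
    (100L below and 100L above the estimate, as described in the post).
    """
    if band < 0:
        raise ValueError("band must be non-negative")
    return estimated_level - band, estimated_level + band


def is_in_power_range(book_level: int, estimated_level: int, band: int = 100) -> bool:
    """Check whether a book's level falls inside the student's band (inclusive)."""
    low, high = power_text_range(estimated_level, band)
    return low <= book_level <= high


# Hypothetical example: a student with an estimated level of 650L
low, high = power_text_range(650)
print(f"Band: {low}L to {high}L")
print(is_in_power_range(700, 650))  # a 700L book sits inside the band
print(is_in_power_range(800, 650))  # an 800L book sits above it
```

Under these assumptions, a student estimated at 650L would be matched with books between 550L and 750L.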

Students are pushed to read Power Texts immediately, minimizing the time they spend reading books that are far below their reading level, which can stifle progress [3] by not building vocabulary or familiarity with complex sentence structures. Time spent reading texts that are too difficult, where students spend more time decoding than making meaning [4], is also minimized.

Students are pushed to alternate between Power Texts and free choice books in order to find a balance between fostering a love of reading and being appropriately challenged. As students continue to read, they are consistently assessed through Cloze questions, and text difficulty adapts to best meet their needs.

As you can see, there are a good many important factors that you may not have considered when thinking about your reading diagnostics, which is no surprise because as teachers we spend a great deal of time learning.

[1] Heller, R. “Make It a Priority to Assess Students’ Literacy Skills” Web log post. Retrieved October 12, 2016, from http://www.adlit.org/adlit_101/improving_literacy_instruction_in_your_school/make_it_a_priority_to_assess_students_literacy_skills/

[2] Critical Thinking and Literature-Based Reading. Report. (1997) Madison, WI: The Institute for Academic Excellence. Retrieved October 12, 2016 from http://www.eric.ed.gov/ERICWebPortal/custom/portlets/recordDetails/detailmini.jsp_nfpb=true&_&ERICExtSearch_SearchValue_0=ED421688&ERICExtSearch_SearchType_0=no&accno=ED421688

[3] A Practical Guide to Selecting “Just Right” Books for Independent Reading. Retrieved October 12, 2015 from https://www.professionalpractice.org/about-us/selecting_just_right_books/

[4] Mesmer, H. A. E. (2009). Textual scaffolds for developing fluency in beginning readers: Accuracy and reading rate in qualitatively leveled and decodable text. Literacy Research and Instruction, 49(1), 20-39.

Posted on 10.Oct.16 in Literacy Strategies